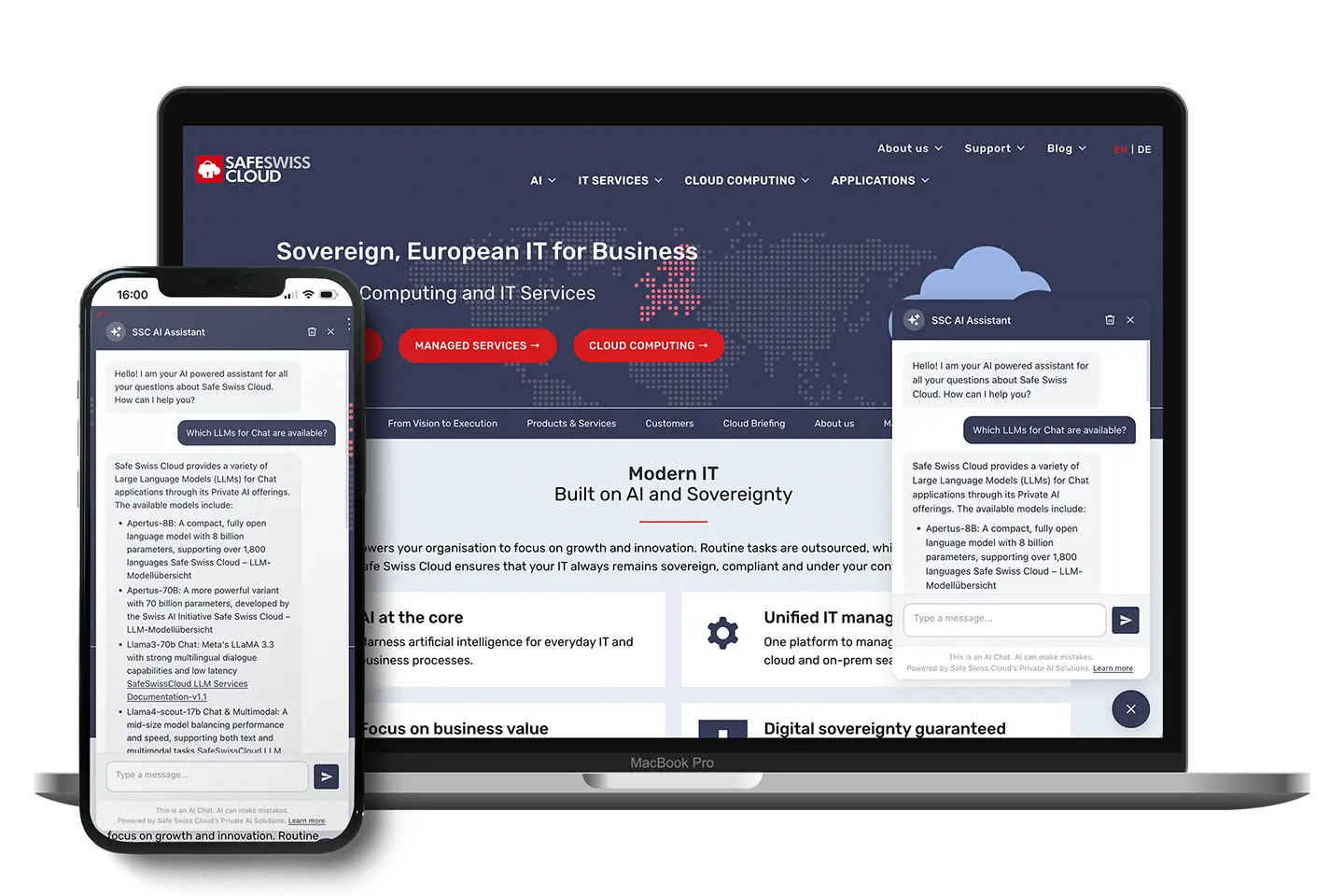

The project had a clear requirement: an AI chatbot for a WordPress website that runs exclusively on Swiss infrastructure. The AI provider is Safe Swiss Cloud Private AI — an OpenAI-compatible endpoint operated on servers in Switzerland. The chatbot should engage with the website’s content, serve multilingual visitors correctly, and integrate seamlessly with WordPress.

The problem with “off-the-shelf” chatbot solutions

Most ready-made chatbot solutions in the WordPress plugin repository are a black hole when it comes to data. They are typically quick to set up, but they only support the APIs of the well-known large AI providers such as OpenAI, Anthropic, or Google, and they are mostly inflexible. For companies with European customers and GDPR obligations, that alone is problematic. For a Swiss cloud company that stands for “your data stays in Switzerland”, such a solution is simply unworkable.

With Private AI, Safe Swiss Cloud operates its own AI service on Swiss infrastructure. Models run on servers in Switzerland, under Swiss data protection law, with no transfers to third parties. What was missing was a WordPress plugin that integrates this service cleanly into an existing website: one that doesn’t just “somehow work” but actually engages with the site’s content.

This article presents the architecture of the result: the WordPress plugin wp-ai-chatbot, version 1.1.1. For now, it is being developed further as a proprietary plugin, so that specific requirements can be modeled precisely and the plugin can be adapted to other projects and use cases. Just get in touch if you’re interested.

Plugin architecture at a glance

wp-ai-chatbot/
├── wp-ai-chatbot.php             # Entry point, constants, autoloaders
├── includes/
│   ├── Admin/
│   │   ├── Settings.php          # Settings page (5 sections, Settings API)
│   │   └── Updater.php           # Self-hosted auto-update via downloads.netzkundig.com
│   ├── Chat/
│   │   ├── AI_Adapter.php        # Provider abstraction (SDK → direct API fallback)
│   │   ├── Frontend.php          # Widget rendering & wp_localize_script
│   │   ├── Language_Detector.php # 3-layer language detection
│   │   └── REST_Controller.php   # Public + admin REST endpoints
│   └── Content/
│       ├── Indexer.php           # RAG: MySQL FULLTEXT + pgvector integration
│       ├── Vector_Search.php     # Semantic search via PostgreSQL pgvector
│       └── Knowledge_Base.php    # Private CPT for custom knowledge entries
└── assets/
    ├── css/chat-widget.css       # CSS custom properties, typing effect
    └── js/chat-widget.js         # Vanilla JS, no framework, no build step

There is no frontend build pipeline and no React. The plugin uses vanilla JavaScript, CSS custom properties, the WordPress-native REST API, and the Settings API. On the PHP side it requires PHP 8.1+ and uses PSR-4 autoloading via spl_autoload_register.

Provider abstraction: OpenAI-compatible interface as common denominator

The heart of it is AI_Adapter.php, which implements a 3-stage fallback:

1. WP AI Client SDK (ships with WordPress 7.0+)
   ↓ not available?
2. PHP AI Client SDK (via Composer)
   ↓ not available?
3. Direct wp_remote_post() call
   (OpenAI / Anthropic / Google / Mistral / Custom endpoint)

Safe Swiss Cloud Private AI is configured as a custom endpoint: a URL that implements the OpenAI Chat Completions API format (POST /v1/chat/completions). The plugin sends:

{
  "model": "configured-model-name",
  "messages": [
    { "role": "system", "content": "System instruction with RAG context" },
    { "role": "user", "content": "Previous question" },
    { "role": "assistant", "content": "Previous answer" },
    { "role": "user", "content": "Current question" }
  ],
  "temperature": 0.7,
  "max_tokens": 1024
}

Both temperature and max_tokens are adjustable in Settings. The API key is stored AES-256-CBC-encrypted (with the WordPress auth salts as the key) and never appears in the frontend HTML or JavaScript.
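The key-storage scheme can be illustrated with a minimal Node.js sketch. The plugin itself does this in PHP. Several details here are assumptions, because the article does not document them. The 32-byte key is derived by hashing the concatenated salts with SHA-256. In WordPress these would be, for example, the AUTH_KEY and AUTH_SALT constants from wp-config.php. A random 16-byte IV is prepended to the ciphertext, and the result is base64-encoded. `deriveKey`, `encryptApiKey` and `decryptApiKey` are hypothetical names.

```javascript
const crypto = require("node:crypto");

// Hypothetical key derivation: SHA-256 over the concatenated salts yields
// exactly the 32 bytes AES-256 needs. The plugin's real derivation is not documented.
function deriveKey(salts) {
  return crypto.createHash("sha256").update(salts.join("")).digest();
}

// Encrypt with AES-256-CBC; a fresh random IV is prepended to the ciphertext.
function encryptApiKey(plaintext, salts) {
  const key = deriveKey(salts);
  const iv = crypto.randomBytes(16); // CBC needs an unpredictable 16-byte IV per encryption
  const cipher = crypto.createCipheriv("aes-256-cbc", key, iv);
  const ciphertext = Buffer.concat([cipher.update(plaintext, "utf8"), cipher.final()]);
  return Buffer.concat([iv, ciphertext]).toString("base64");
}

// Reverse the steps: split off the IV, then decrypt the remainder.
function decryptApiKey(stored, salts) {
  const key = deriveKey(salts);
  const raw = Buffer.from(stored, "base64");
  const iv = raw.subarray(0, 16);
  const decipher = crypto.createDecipheriv("aes-256-cbc", key, iv);
  return Buffer.concat([decipher.update(raw.subarray(16)), decipher.final()]).toString("utf8");
}

// Usage with placeholder salt values.
const salts = ["example-auth-key", "example-auth-salt"];
const stored = encryptApiKey("sk-example-123", salts);
console.log(decryptApiKey(stored, salts)); // prints sk-example-123
```

Because the IV is random, encrypting the same key twice yields different stored strings. Note that CBC on its own does not detect tampering. Pairing it with an HMAC, or switching to an authenticated mode such as AES-256-GCM, would close that gap.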

RAG & knowledge base: how website content and private content enter the context

Alongside the automatically indexed website content, there’s a second channel for context knowledge: a private custom post type (wpaic_knowledge), maintained in the WordPress backend under the settings menu and not accessible to frontend visitors.

Entries are created with the standard WP editor (title + content) or imported via .txt file upload. Independently of the “Index Post Types” setting, they are always fully indexed and embedded — including pgvector embedding on save_post.

Important behavior: answers never cite these entries with a link. The content serves exclusively as context knowledge for more precise answers — internal FAQs, product or pricing details, support information — without making it publicly accessible. This is enforced both via the system prompt and via a JS-side filter rule in the Markdown renderer.

Indexing

Content is stored in {prefix}wpaic_index (MySQL) — split into ~500-word chunks with 50-word overlap. A MySQL FULLTEXT index sits on top of the table. Hooks:

- save_post → automatic re-index of the affected post
- delete_post / trashed_post → automatic removal from the index
- Manual batch re-index via POST /wpaic/v1/reindex (10 posts/batch, AJAX progress)

Hard limit: 300,000 characters per post. The FULLTEXT index is only built during a manual re-index, never on page load, to prevent FastCGI timeouts.
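The chunking rule can be illustrated with a small word-window function, sketched in JavaScript rather than the plugin's PHP. It rests on two assumptions. First, words are split on whitespace. Second, the 300,000-character limit is applied by truncating the post text before chunking. `chunkWords` and `MAX_CHARS` are hypothetical names.

```javascript
const MAX_CHARS = 300000; // hard per-post limit from the article

// Split text into windows of `size` words; consecutive windows share `overlap` words.
function chunkWords(text, size = 500, overlap = 50) {
  if (overlap >= size) throw new RangeError("overlap must be smaller than size");
  const words = text.slice(0, MAX_CHARS).split(/\s+/).filter(Boolean);
  const chunks = [];
  const step = size - overlap; // each window starts (size - overlap) words after the previous one
  for (let start = 0; start < words.length; start += step) {
    chunks.push(words.slice(start, start + size).join(" "));
    if (start + size >= words.length) break; // this window already reached the end
  }
  return chunks;
}

// Usage: 1,000 words yield windows starting at words 0, 450 and 900.
const text = Array.from({ length: 1000 }, (_, i) => `w${i}`).join(" ");
const chunks = chunkWords(text);
console.log(chunks.length); // 3
```

The 50-word overlap means a sentence cut at a chunk boundary still appears whole in at least one of the two neighbouring chunks, as long as it is shorter than the overlap.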

Retrieval pipeline

User query
   │
   ▼
Language detection
   │
   ▼
Priority chunks (is_priority=1) → always in the context
   │
   ▼
FULLTEXT search (language-preferring)
   │ < 50 % of slots filled?
   ▼
Top up with chunks in other languages
   │ pgvector hybrid mode active?
   ▼
Semantic results fill remaining slots
   │
   ▼
Top-N chunks → system prompt
   │
   ▼
AI generates answer with inline source links

Fallback logic: if FULLTEXT returns no results, the plugin automatically falls back to a LIKE search. This happens with short queries, because MySQL’s FULLTEXT parser ignores words below its minimum token length, and on restricted hosting.
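The slot-filling order in the pipeline can be expressed as a pure function over already-ranked result lists. This is a sketch under several assumptions. Each chunk is an object with `id` and `lang`. FULLTEXT and vector results arrive sorted by relevance. Priority chunks occupy slots like any other chunk and count toward the 50 % threshold. `fillContextSlots` and its parameters are hypothetical.

```javascript
// Fill at most `slots` context entries in the order shown in the diagram:
// priority → same-language FULLTEXT → other-language top-up → semantic fill.
function fillContextSlots({ priority, fulltext, vector = [], lang, slots, hybrid = false }) {
  const selected = [];
  const seen = new Set();
  // Add a chunk unless the slots are full or it is already selected.
  const add = (chunk) => {
    if (selected.length < slots && !seen.has(chunk.id)) {
      seen.add(chunk.id);
      selected.push(chunk);
    }
  };

  priority.forEach(add); // priority chunks go in first (capped at `slots` in this sketch)
  fulltext.filter((c) => c.lang === lang).forEach(add);

  // Top up with other languages only if fewer than half of the slots are filled.
  if (selected.length < slots / 2) {
    fulltext.filter((c) => c.lang !== lang).forEach(add);
  }
  // In hybrid mode, semantic hits fill whatever space remains.
  if (hybrid) vector.forEach(add);
  return selected;
}

// Usage: a German query on a partially translated site.
const result = fillContextSlots({
  priority: [{ id: 1, lang: "en" }],
  fulltext: [{ id: 2, lang: "de" }, { id: 3, lang: "en" }, { id: 4, lang: "fr" }],
  vector: [{ id: 5, lang: "en" }, { id: 3, lang: "en" }],
  lang: "de",
  slots: 6,
  hybrid: true,
});
console.log(result.map((c) => c.id)); // [ 1, 2, 3, 4, 5 ]
```

Deduplicating by chunk id matters in hybrid mode. The same chunk is often found by both FULLTEXT and pgvector (id 3 above), and it should occupy only one slot.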

Semantic search: pgvector as an optional extension

The main reason for a separate PostgreSQL database: MySQL has no production-ready implementation for Approximate Nearest Neighbor (ANN) search over vectors. pgvector brings a vector(N) column type, the cosine distance operator (<=>), and IVFFlat / HNSW indexes.

The plugin creates automatically:

CREATE TABLE {prefix}wpaic_vectors (
    id          bigserial PRIMARY KEY,
    chunk_id    bigint    NOT NULL UNIQUE,
    post_id     bigint    NOT NULL,
    embedding   vector(N) NOT NULL,
    indexed_at  timestamp NOT NULL DEFAULT NOW()
);

CREATE INDEX ON {prefix}wpaic_vectors
    USING ivfflat (embedding vector_cosine_ops) WITH (lists = 100);

The connection runs through PHP’s pdo_pgsql, with no PHP FFI, no native libraries, and no compiled models. It works on shared hosting as long as pdo_pgsql is enabled.
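To make the `<=>` operator concrete: pgvector’s cosine distance is 1 minus the cosine similarity. A query such as `ORDER BY embedding <=> '[...]' LIMIT k` therefore returns the k chunks pointing in the most similar direction. The brute-force JavaScript sketch below computes the same exact ranking in memory. An IVFFlat index trades exactness for speed by scanning only the lists closest to the query (`ivfflat.probes`, 1 by default), so its results can differ slightly. `cosineDistance` and `nearestChunks` are illustrative names.

```javascript
// Cosine distance as pgvector's <=> defines it: 1 - (a·b) / (|a| |b|).
function cosineDistance(a, b) {
  if (a.length !== b.length) throw new RangeError("dimension mismatch");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return 1 - dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Exact k-nearest-neighbour search: score every row, sort ascending by distance.
function nearestChunks(rows, query, k) {
  return rows
    .map((row) => ({ chunkId: row.chunkId, distance: cosineDistance(row.embedding, query) }))
    .sort((x, y) => x.distance - y.distance)
    .slice(0, k);
}

// Usage with toy 3-dimensional embeddings.
const rows = [
  { chunkId: 10, embedding: [1, 0, 0] },
  { chunkId: 11, embedding: [0, 1, 0] },
  { chunkId: 12, embedding: [0.9, 0.1, 0] },
];
console.log(nearestChunks(rows, [1, 0, 0], 2).map((r) => r.chunkId)); // [ 10, 12 ]
```

The full scan is O(rows × dimensions) per query. Avoiding that on larger sites is exactly the job of the ANN index.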

Embedding provider is independent of the chat provider

Chat and embeddings are fully decoupled. Configurable combinations: e.g. Private AI for chat + OpenAI text-embedding-3-small for embeddings, or a custom embedding endpoint. Built-in dimensions:

| Model | Dimensions |
|---|---|
| text-embedding-3-small (OpenAI) | 1536 |
| text-embedding-004 (Google) | 768 |
| mistral-embed (Mistral) | 1024 |
| Custom (OpenAI-compatible) | freely configurable |

Search modes

- Hybrid: FULLTEXT first, pgvector fills the remaining context slots

- Vector only: semantic search exclusively

- Graceful degradation: all vector methods return [] / false when PostgreSQL isn’t reachable → automatic FULLTEXT fallback, no error message
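The degradation rule amounts to treating any empty, false or failed vector result as a signal to use FULLTEXT instead. Here is a minimal synchronous sketch, mirroring the synchronous PHP side. It assumes both search backends are passed in as plain functions. `searchWithFallback` and the backend signatures are hypothetical.

```javascript
// Try semantic search first; fall back silently to FULLTEXT on [], false or an error.
function searchWithFallback(query, vectorSearch, fulltextSearch) {
  let results;
  try {
    results = vectorSearch(query);
  } catch {
    results = false; // e.g. PostgreSQL unreachable: swallow the error, show no message
  }
  if (!Array.isArray(results) || results.length === 0) {
    return { source: "fulltext", results: fulltextSearch(query) };
  }
  return { source: "vector", results };
}

// Usage: a vector backend that cannot connect.
const unreachable = () => {
  throw new Error("connection refused");
};
const fulltext = (q) => [`fulltext hit for "${q}"`];
console.log(searchWithFallback("privacy", unreachable, fulltext).source); // prints fulltext
```

Returning the source alongside the results keeps the fallback invisible to visitors while still letting the admin side log which path was taken.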

Multilingualism: 3-layer detection

User message
   │
   ▼
Unicode script detection
(CJK, Cyrillic, Arabic, Hebrew, Thai, Devanagari)
   │ Latin script?
   ▼
Stopword matching
(DE, EN, FR, ES, IT, NL, PT, PL)
   │ uncertain?
   ▼
Browser locale (Accept-Language header)
   │ not available?
   ▼
Plugin default language

The language-preferring RAG search fills context slots first with content in the detected language. If fewer than half of the slots are filled, chunks in other languages are added. This is useful for partially translated sites.

WPML/Polylang integration: during indexing, wpml_permalink is applied so that stored URLs always include the language slug and the full parent-page hierarchy (e.g. /de/faqs/private-ai/slug/ instead of /slug/).
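The three-layer cascade could look roughly like this in JavaScript (the plugin implements it in PHP, in Language_Detector.php). Everything concrete here is an assumption: the script-to-language mapping, the deliberately tiny stopword lists, the rule that a stopword guess counts as certain only with at least two hits and a clear lead, and all function names.

```javascript
// Layer 1: non-Latin scripts map directly to a language (assumed mapping;
// e.g. Cyrillic could also be Ukrainian or Bulgarian).
const SCRIPT_RULES = [
  [/[\p{Script=Hiragana}\p{Script=Katakana}]/u, "ja"], // check kana before Han
  [/\p{Script=Hangul}/u, "ko"],
  [/\p{Script=Han}/u, "zh"],
  [/\p{Script=Cyrillic}/u, "ru"],
  [/\p{Script=Arabic}/u, "ar"],
  [/\p{Script=Hebrew}/u, "he"],
  [/\p{Script=Thai}/u, "th"],
  [/\p{Script=Devanagari}/u, "hi"],
];

// Layer 2: illustrative stopword lists; real lists would be much longer.
const STOPWORDS = {
  de: ["der", "die", "das", "und", "ist", "nicht", "wie", "wo", "werden", "meine", "mit", "für"],
  en: ["the", "and", "is", "are", "where", "what", "how", "my", "with", "for", "of"],
  fr: ["le", "la", "les", "et", "est", "où", "mes", "des", "pour", "avec", "une"],
  es: ["el", "los", "las", "y", "es", "dónde", "mis", "para", "con", "una"],
  it: ["il", "gli", "e", "è", "dove", "sono", "per", "con", "che", "una"],
  nl: ["de", "het", "en", "waar", "mijn", "voor", "met", "een", "niet", "van"],
  pt: ["os", "as", "é", "onde", "meus", "com", "uma", "não", "do", "da"],
  pl: ["i", "jest", "gdzie", "moje", "dla", "nie", "się", "na", "że", "to"],
};

function stopwordGuess(text) {
  const words = text.toLowerCase().match(/\p{L}+/gu) || [];
  const scores = Object.entries(STOPWORDS)
    .map(([lang, list]) => [lang, words.filter((w) => list.includes(w)).length])
    .sort((a, b) => b[1] - a[1]);
  const [[bestLang, best], [, second]] = scores;
  // "Certain" only with at least two hits and a clear lead over the runner-up.
  return best >= 2 && best > second ? bestLang : null;
}

// Layer 3: highest-weighted primary subtag from an Accept-Language header.
function parseAcceptLanguage(header) {
  if (!header) return null;
  const entries = header
    .split(",")
    .map((part) => {
      const [tag, ...params] = part.trim().split(";");
      const qParam = params.find((p) => p.trim().startsWith("q="));
      const q = qParam ? parseFloat(qParam.trim().slice(2)) : 1;
      return { lang: tag.split("-")[0].toLowerCase(), q: Number.isNaN(q) ? 0 : q };
    })
    .filter((e) => e.lang && e.lang !== "*" && e.q > 0)
    .sort((a, b) => b.q - a.q);
  return entries.length ? entries[0].lang : null;
}

function detectLanguage(message, acceptLanguage = "", fallback = "en") {
  for (const [pattern, lang] of SCRIPT_RULES) {
    if (pattern.test(message)) return lang;
  }
  return stopwordGuess(message) ?? parseAcceptLanguage(acceptLanguage) ?? fallback;
}

console.log(detectLanguage("Où sont stockées mes données ?")); // prints fr
```

A one-word message such as “Hallo” produces no confident stopword match. It therefore falls through to the browser locale and, failing that, to the plugin default.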

REST API

Public (rate-limited: 30 req/min per IP hash):

POST /wp-json/wpaic/v1/chat
GET  /wp-json/wpaic/v1/status

Request body for /chat:

{
  "message": "Where is my data stored?",
  "history": [...],
  "locale": "en-GB"
}

Response:

{
  "success": true,
  "reply": "Your data is stored exclusively on servers in Switzerland...",
  "language": "en",
  "sources": [
    { "title": "Privacy", "url": "https://example.com/en/privacy/" }
  ],
  "provider": "custom"
}

Admin (requires manage_options + nonce):

POST /wpaic/v1/reindex            # Batch re-index (10 posts/batch)
POST /wpaic/v1/reindex-vectors    # Embedding generation (10 chunks/batch)
POST /wpaic/v1/test-pg-connection
POST /wpaic/v1/initialize-pg
GET  /wpaic/v1/vector-status

Chat widget: vanilla JS, no framework

The widget renders in wp_footer. Configuration is passed in via wp_localize_script as a wpaicConfig object — API keys never appear in the frontend.

Features in JS:

- Typing effect for AI responses (~29 words/second, blinking block cursor via CSS)

- Session persistence via sessionStorage (chat history persists across page navigation)

- Markdown rendering (bold, italic, links, code) with XSS protection (only http:// / https:// in links)

- Language resolution for the welcome message: <html lang> (WPML/Polylang) → browser language → plugin default
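The link rules can be sketched as a tiny escape-first renderer: only http:// and https:// targets are allowed, and knowledge-base entries are never linked. This sketch makes three assumptions. The whole message is HTML-escaped before any Markdown pattern is applied. Knowledge-base links are recognised by the string `wpaic_knowledge` in the URL, since the article does not say how the plugin’s filter identifies them. The regexes cover only the four listed constructs, with no nesting rules. `renderMarkdown` and `isAllowedHref` are hypothetical names.

```javascript
// Escape HTML first so that model output can never inject tags or attributes.
function escapeHtml(text) {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&#39;");
}

// Only absolute http(s) links; the knowledge-base check is a hypothetical rule.
function isAllowedHref(url) {
  return /^https?:\/\//i.test(url) && !url.includes("wpaic_knowledge");
}

function renderMarkdown(markdown) {
  return escapeHtml(markdown)
    .replace(/`([^`]+)`/g, "<code>$1</code>")
    .replace(/\*\*([^*]+)\*\*/g, "<strong>$1</strong>")
    .replace(/\*([^*]+)\*/g, "<em>$1</em>")
    .replace(/\[([^\]]+)\]\(([^)\s]+)\)/g, (match, label, url) =>
      // Disallowed links (javascript:, data:, knowledge-base URLs) degrade to plain text.
      isAllowedHref(url) ? `<a href="${url}">${label}</a>` : label
    );
}

console.log(renderMarkdown("See **[Privacy](https://example.com/en/privacy/)**"));
// prints See <strong><a href="https://example.com/en/privacy/">Privacy</a></strong>
```

Escaping first is the key design choice. Because quotes are already turned into entities before the link pattern runs, a URL can never break out of the `href` attribute.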

CSS custom properties for theming:

--wpaic-primary /* Primary color, configurable via Iris color picker */
--wpaic-radius  /* Border radius */
--wpaic-font    /* Font family */

Security checklist

- API keys: AES-256-CBC, WordPress auth salts as the key, never in the frontend

- Rate limiting: 30 req/min per IP hash via WP transients

- Input: sanitize_textarea_field, max 2000 characters per history message, max 10 messages

- Admin endpoints: WP nonce + manage_options capability check

- Knowledge-base CPT (wpaic_knowledge): not public, not linkable in responses
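Two of these checks are easy to sketch outside WordPress. The code below is a JavaScript sketch, not the plugin’s PHP. It assumes a fixed one-minute window per hashed IP, with a `Map` standing in for WP transients. It applies the input limits by keeping the newest 10 history entries and truncating each to 2,000 characters, whereas the plugin may reject oversized requests instead. `RateLimiter`, `sanitizeHistory` and the salt are hypothetical, and the tag stripping done by sanitize_textarea_field is not reproduced.

```javascript
const crypto = require("node:crypto");

// Fixed-window limiter: at most `limit` requests per `windowMs` per hashed IP.
class RateLimiter {
  constructor(limit = 30, windowMs = 60_000, salt = "example-salt") {
    this.limit = limit;
    this.windowMs = windowMs;
    this.salt = salt;
    this.buckets = new Map(); // stand-in for WP transients: key → { count, expires }
  }

  // Hash the IP so raw addresses are never stored.
  key(ip) {
    return crypto.createHash("sha256").update(this.salt + ip).digest("hex");
  }

  allow(ip, now = Date.now()) {
    const k = this.key(ip);
    const bucket = this.buckets.get(k);
    if (!bucket || bucket.expires <= now) {
      this.buckets.set(k, { count: 1, expires: now + this.windowMs }); // new window
      return true;
    }
    if (bucket.count >= this.limit) return false; // limit reached in this window
    bucket.count += 1;
    return true;
  }
}

// Keep the 10 most recent history messages, each cut to 2,000 characters.
function sanitizeHistory(history, maxMessages = 10, maxChars = 2000) {
  return history
    .slice(-maxMessages)
    .map((m) => ({ role: m.role, content: String(m.content).slice(0, maxChars) }));
}
```

A fixed window is the simplest fit for a transient with an expiry time. The trade-off is that a client can send up to twice the limit across a window boundary.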

Custom endpoint: requirements for Private AI

The plugin communicates with any OpenAI-compatible endpoint. For Safe Swiss Cloud Private AI (or your own vLLM/Ollama endpoint), the minimum requirements are:

- POST /v1/chat/completions with the OpenAI request format

- Response with choices[0].message.content

- HTTPS with a valid, publicly trusted certificate

- Timeout: 60 seconds (responses must arrive within this window)

For embeddings (POST /v1/embeddings):

- Response with data[0].embedding as a float array

- Fixed, configured dimension
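A minimal client for such an endpoint can be sketched with the `fetch` API built into Node.js 18+. It builds the request body in the format shown earlier and enforces the 60-second timeout with `AbortSignal.timeout`. It then reads `choices[0].message.content` and `data[0].embedding`, rejecting embeddings whose length differs from the configured dimension. The Bearer authorization header is an assumption, though it is the usual scheme for OpenAI-compatible APIs. The base URL, model names and all function names are placeholders, not Private AI’s actual values.

```javascript
const TIMEOUT_MS = 60_000;

// Chat Completions body: system prompt, prior turns, new question.
// Defaults mirror the example payload (temperature 0.7, max_tokens 1024).
function buildChatPayload({ model, systemPrompt, history, question, temperature = 0.7, maxTokens = 1024 }) {
  return {
    model,
    messages: [
      { role: "system", content: systemPrompt },
      ...history,
      { role: "user", content: question },
    ],
    temperature,
    max_tokens: maxTokens,
  };
}

async function postJson(url, apiKey, body) {
  const response = await fetch(url, {
    method: "POST",
    headers: { "Content-Type": "application/json", Authorization: `Bearer ${apiKey}` },
    body: JSON.stringify(body),
    signal: AbortSignal.timeout(TIMEOUT_MS), // abort if no response within 60 s
  });
  if (!response.ok) throw new Error(`HTTP ${response.status}`);
  return response.json();
}

// Pull the answer text out of a Chat Completions response.
function extractReply(json) {
  const content = json?.choices?.[0]?.message?.content;
  if (typeof content !== "string") throw new Error("missing choices[0].message.content");
  return content;
}

// Pull the vector out of an embeddings response and check its dimension.
function extractEmbedding(json, dimension) {
  const embedding = json?.data?.[0]?.embedding;
  if (!Array.isArray(embedding) || embedding.length !== dimension) {
    throw new Error(`expected an embedding with ${dimension} dimensions`);
  }
  return embedding;
}

async function chat(baseUrl, apiKey, payload) {
  return extractReply(await postJson(`${baseUrl}/v1/chat/completions`, apiKey, payload));
}

async function embed(baseUrl, apiKey, model, input, dimension) {
  return extractEmbedding(await postJson(`${baseUrl}/v1/embeddings`, apiKey, { model, input }), dimension);
}
```

Checking the dimension before anything is written matters because a `vector(N)` column rejects vectors of any other length. An endpoint that silently switches models would otherwise fail only at insert time.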

Status and outlook

The plugin has been in production since March 2026 (v1.0.0), currently at v1.1.1. In the pipeline: a logging feature, speech-to-text via the Web Speech API (device-side, with no server-side processing), and a cleaner integration of the WordPress AI Client SDK once it’s available.

The plugin continues to be developed as a proprietary plugin. It’s straightforward to adapt for other projects and websites — just get in touch if you’re interested.